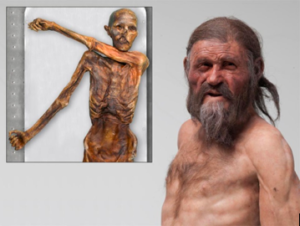

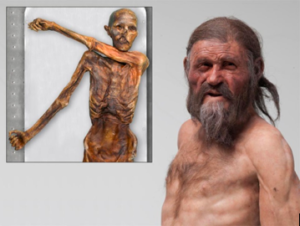

Nora Gedgaudas

by admin 7 Comments

Some 5,300 years ago, in what is now the Ötztal Alps between Austria and Italy, a man whom researchers later named “Ötzi” lived and met an untimely and suspicious death. His frozen and … [Read more...]

by Nora 12 Comments

Gluten (the Latin word for “glue”) is a substance found in numerous grains. It is actually a complex of proteins and lectins found in most known grains, including even rice; however … [Read more...]

by Nora 5 Comments

Q: While I'm in the process of cutting out grains, I'm curious about quinoa. Do you recommend cutting it out as well? My other question is about the butter used in your nut ball snacker … [Read more...]